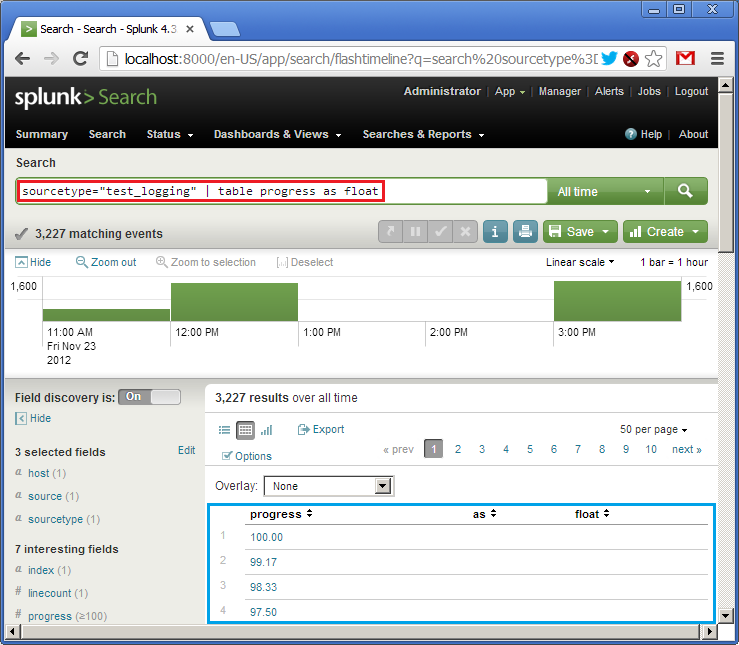

Basic: This mode builds a query for you using the settings in the Data Source, Data Selection, and Data Source Filter parameters. In most cases, this will be sufficient.

Advanced: This mode requires you to write an SQL-like query to call data from Splunk. Treat collections as table names and fields as columns. The available fields and their descriptions are documented in the Splunk Data Model. (The query property is only available in Advanced Mode.)

Choose one or more columns to return from the query. The columns available depend on the data source selected.

To filter the data, choose an input column (the available input columns vary depending on the data source), choose a method of comparing the column to the value, and choose how the comparison is applied:

- Is: Compares the column to the value using the comparator.
- Not: Reverses the effect of the comparison, so "Equals" becomes "Not equals", "Less than" becomes "Greater than or equal to", and so on.

Specify the URL of the Splunk server from which data will be called, and insert the corresponding Splunk login password. Users have the option to store their password inside the component; however, we highly recommend using the Password Manager feature instead. JDBC parameters supported by the database driver can also be supplied; the available parameters are explained in the Data Model. Manual setup is not usually required, since sensible defaults are assumed.

Note: When a user creates a report in the Splunk portal, the user must select a time range. This selected time range is used by default when querying the report. For example, if the chosen time range is 30 days, selecting all data from the report will only return the data from within the last 30 days. If the report has no data from the last 30 days, no data is returned.

Using a WHERE filter (available in both Basic and Advanced Mode within Matillion ETL), users can specify a time range that overrides the default time range, enabling them to view data from outside it. However, only columns that are present in the default time range are returned. As such, Basic Mode will not return any columns if no data exists in the report for the default time range. In Advanced Mode, however, users can specify the actual columns by name in an SQL SELECT query, such as:

`SELECT <columns> FROM <report> WHERE <time column> < 'DD/MM/YYYY 00:00:00'`

An SQL query in Advanced Mode such as this one enables users to access any data and columns outside the default time range that they specified when they created the report in Splunk. Alternatively, users can create a report over all time periods, which returns all data by default.

If the target table undergoes a change in structure, it will be recreated. Otherwise, the target table is truncated. Setting the Load Option "Recreate Target Table" to "Off" will prevent both recreation and truncation. Do not modify the target table structure manually.
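The Is/Not qualifier and comparator described above combine into a single condition per filter. As a minimal sketch of that idea (not Matillion's actual implementation), the Python below turns hypothetical filter settings into an SQL-like query. `Filter`, `build_query`, the comparator-to-operator table, the negations beyond the two given above, and combining filters with AND are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Negations implied by the "Not" qualifier. The "Equals" and "Less than" pairs
# come from the text; the remaining pairs are assumed by analogy.
NEGATED = {
    "Equals": "Not equals",
    "Not equals": "Equals",
    "Less than": "Greater than or equal to",
    "Greater than or equal to": "Less than",
    "Greater than": "Less than or equal to",
    "Less than or equal to": "Greater than",
}

# Hypothetical mapping from comparator names to SQL operators.
SQL_OPERATORS = {
    "Equals": "=",
    "Not equals": "<>",
    "Less than": "<",
    "Less than or equal to": "<=",
    "Greater than": ">",
    "Greater than or equal to": ">=",
}


@dataclass
class Filter:
    column: str
    qualifier: str   # "Is" or "Not"
    comparator: str  # e.g. "Equals", "Less than"
    value: str


def effective_comparator(f: Filter) -> str:
    """Apply the qualifier: "Is" keeps the comparator, "Not" reverses it."""
    if f.qualifier == "Is":
        return f.comparator
    if f.qualifier == "Not":
        return NEGATED[f.comparator]
    raise ValueError(f"unknown qualifier: {f.qualifier!r}")


def quote(value: str) -> str:
    """Quote a literal SQL-style, doubling any embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_query(report: str, columns: list[str], filters: list[Filter]) -> str:
    """Build a SELECT over the report, ANDing all filter conditions (assumption)."""
    sql = f"SELECT {', '.join(columns)} FROM {report}"
    if filters:
        conditions = [
            f"{f.column} {SQL_OPERATORS[effective_comparator(f)]} {quote(f.value)}"
            for f in filters
        ]
        sql += " WHERE " + " AND ".join(conditions)
    return sql


if __name__ == "__main__":
    # "my_report" and "event_time" are hypothetical names.
    time_filter = Filter("event_time", "Not", "Less than", "01/01/2024 00:00:00")
    print(build_query("my_report", ["host", "event_time"], [time_filter]))
```

Running it prints `SELECT host, event_time FROM my_report WHERE event_time >= '01/01/2024 00:00:00'`: the "Not" qualifier turns "Less than" into "Greater than or equal to", which is the kind of time-column WHERE filter the note above describes for reaching beyond a report's default time range.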

The Splunk Query component integrates with the Splunk API to retrieve data from a Splunk server and load that data into a table. Warning: This component is potentially destructive.

Once the pipeline starts running, there is a corresponding job reported in Pipeline Version Details on the HERE platform portal and in Pipelines API responses. Each pipeline job is assigned a link to the logs for that job run.

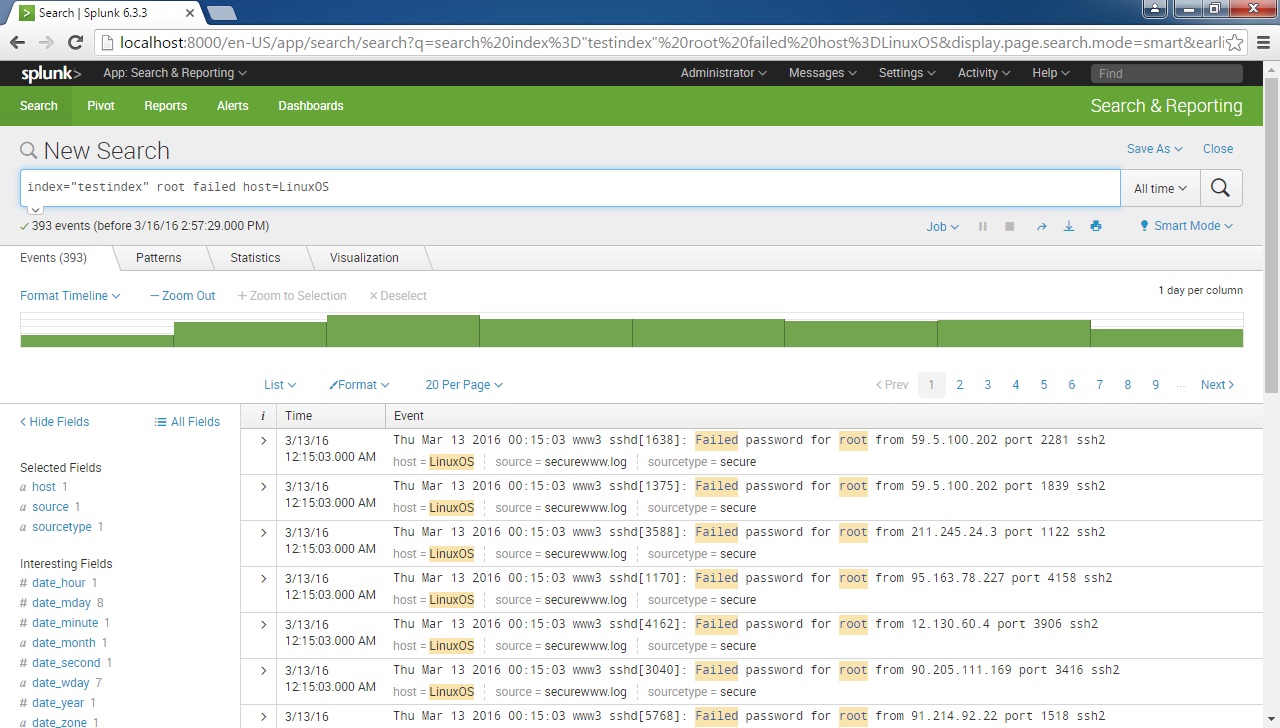

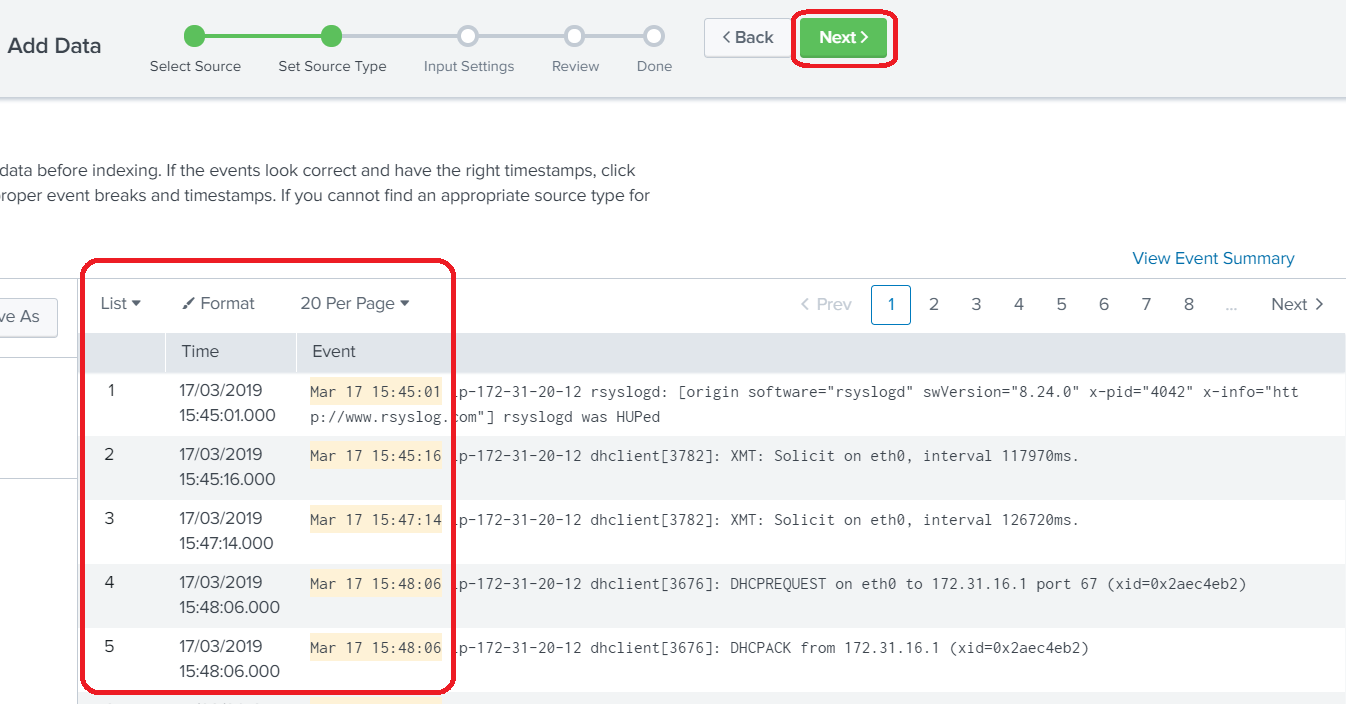

You can use application logs to debug and troubleshoot pipeline issues.

During the pipeline setup (before it is submitted to be run), the pipeline service does not produce any logs. Any feedback is provided only through the Pipeline service APIs. Once a pipeline job is submitted, errors can occur in two different scenarios: before and after the pipeline has started running. There are a number of steps that the pipeline goes through before it actually starts running, and any errors at this point are not attached to a job. These errors are first captured internally by the platform and then pushed to your appropriate Splunk index. Only error logs related to your pipeline appear in your specific Splunk index. To search your Splunk index for these logs, use the pipeline or pipeline version UUID.
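Where feedback appears depends on how far the pipeline got. The Python below is a minimal sketch of that decision, assuming three stages (setup, submitted but not yet running, running). `Stage`, `where_to_look`, and `uuid_search` are hypothetical helpers, and searching for the UUID as a quoted free-text term in the index is an assumed way of applying the advice above, not a documented HERE query.

```python
import uuid
from enum import Enum


class Stage(Enum):
    """Hypothetical pipeline stages, following the description above."""
    SETUP = "setup"          # before the job is submitted
    SUBMITTED = "submitted"  # job submitted, pipeline not yet running
    RUNNING = "running"      # pipeline has started running


def where_to_look(stage: Stage) -> str:
    """Return where feedback for a pipeline error is expected at each stage."""
    if stage is Stage.SETUP:
        # The pipeline service produces no logs during setup.
        return "Pipeline service API responses"
    if stage is Stage.SUBMITTED:
        # Errors here are not attached to a job; they are pushed to Splunk.
        return "Splunk index, searched by pipeline or pipeline version UUID"
    # A running pipeline has a job with its own log link.
    return "Job log link in Pipeline Version Details or Pipelines API responses"


def uuid_search(index: str, pipeline_uuid: str) -> str:
    """Build a Splunk search matching a pipeline (version) UUID as free text."""
    # uuid.UUID raises ValueError for malformed input and normalizes the format.
    normalized = str(uuid.UUID(pipeline_uuid))
    return f'index="{index}" "{normalized}"'


if __name__ == "__main__":
    print(where_to_look(Stage.SUBMITTED))
    print(uuid_search("olp-example_common", "123e4567-e89b-12d3-a456-426614174000"))
```

Validating the UUID before building the search catches copy-paste mistakes early, rather than silently returning no results from Splunk.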

Troubleshoot Pipeline Issues

Pipeline logs are stored in the olp-<realm>_common index. For example, if your account is in the olp-example realm, your index would be olp-example_common. You can search it by adding the following string to your Splunk search query: `index = "olp-example_common"`. Any single log line exceeding the allowed limit of 900 KB is discarded and will not be available in Splunk.

For more information on using Splunk, see the tutorials in the Splunk documentation. For more information on configuring log levels in a pipeline, see the Pipelines Developer Guide.
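The index naming rule and the log line limit above are easy to check before you go looking in Splunk. A minimal Python sketch follows; `common_index`, `index_search`, and `discarded_lines` are hypothetical helpers. Accepting the realm with or without the "olp-" prefix, treating 900 KB as 900 × 1024 bytes, and measuring lines as UTF-8 are all assumptions.

```python
# Assumption: 1 KB = 1024 bytes, and lines are measured as UTF-8 bytes.
MAX_LOG_LINE_BYTES = 900 * 1024


def common_index(realm: str) -> str:
    """Map a realm to its pipeline log index, e.g. "olp-example" -> "olp-example_common"."""
    # Assumption: accept the realm with or without the "olp-" prefix.
    name = realm if realm.startswith("olp-") else f"olp-{realm}"
    return f"{name}_common"


def index_search(realm: str) -> str:
    """Splunk search string restricting results to the realm's index."""
    return f'index="{common_index(realm)}"'


def discarded_lines(lines: list[str]) -> list[int]:
    """Indexes of log lines over the limit, which would never reach Splunk."""
    return [
        i for i, line in enumerate(lines)
        if len(line.encode("utf-8")) > MAX_LOG_LINE_BYTES
    ]


if __name__ == "__main__":
    print(index_search("olp-example"))
    print(discarded_lines(["short line", "x" * (MAX_LOG_LINE_BYTES + 1)]))
```

`index_search("olp-example")` targets the same index as the example above, and `discarded_lines` flags oversized lines so they can be split or trimmed in the pipeline before they are silently dropped.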
